[Lab] AWS Lambda LLRT vs Node.js

![[Lab] AWS Lambda LLRT vs Node.js](/_next/image?url=https%3A%2F%2Fcdn.hashnode.com%2Fres%2Fhashnode%2Fimage%2Fupload%2Fv1715799253631%2F9f12f208-484e-499e-9324-ec4f37855660.png&w=3840&q=75)

Cloud Architect/Engineer with leadership and communication skills. Ownership of the entire lifecycle of a project (SDLC), and motivated to continue growing in Cloud and automation. Passionate about new technologies, responsible and enthusiastic collaborator dedicated to streamlining processes and efficiently solving project requirements.

Introduction

AWS Lambda is a serverless compute service that runs code in response to events and automatically manages the underlying resources for you. You can use AWS Lambda to extend other AWS services with custom logic, or to create your own backend services that benefit from AWS scale, security and, the focus of this lab, performance.

One of the biggest challenges shared by AWS Lambda and serverless computing in general is performance. Unlike traditional server-based environments, where infrastructure resources can be provisioned and fine-tuned to meet specific performance requirements, serverless platforms like Lambda abstract away much of the underlying infrastructure management, leaving developers with less control over performance optimization. Additionally, because serverless architectures rely heavily on event-driven processing and pay-per-execution pricing models, optimizing performance becomes crucial for minimizing costs, ensuring responsive user experiences, and meeting stringent latency requirements.

What is Lambda LLRT?

Warning

LLRT is an experimental package. It is subject to change and intended only for evaluation purposes.

AWS has open-sourced its JavaScript runtime, called LLRT (Low Latency Runtime), an experimental, lightweight JavaScript runtime designed to address the growing demand for fast and efficient Serverless applications.

LLRT offers more than 10x faster startup and up to 2x lower overall cost compared to other JavaScript runtimes running on AWS Lambda. It is built in Rust and uses QuickJS as its JavaScript engine, ensuring efficient memory usage and swift startup.

Real-time processing and data transformation are the main use cases for adopting LLRT in future projects. LLRT lets functions focus on critical tasks such as event-driven processing or streaming data, while seamless integration with other AWS services helps keep response times short.

Key features of LLRT

Faster Startup Times: Over 10x faster startup compared to other JavaScript runtimes running on AWS Lambda.

Cost optimization: Up to 2x overall lower cost compared to other runtimes. By optimizing memory usage and reducing startup time, Lambda LLRT helps minimize the cost of running serverless workloads.

Built on Rust: Improves performance, reduces cold start times, and provides memory efficiency, enhanced concurrency and safety.

QuickJS Engine: Lightweight and efficient JavaScript engine written in C. Ideal for fast execution, efficient memory usage, and seamless integration for embedding JavaScript in AWS Lambda.

No JIT compiler: Unlike Node.js, Bun and Deno, LLRT does not include a JIT compiler. This reduces system complexity and runtime size. Without the JIT overhead, CPU and memory resources can be allocated more efficiently.

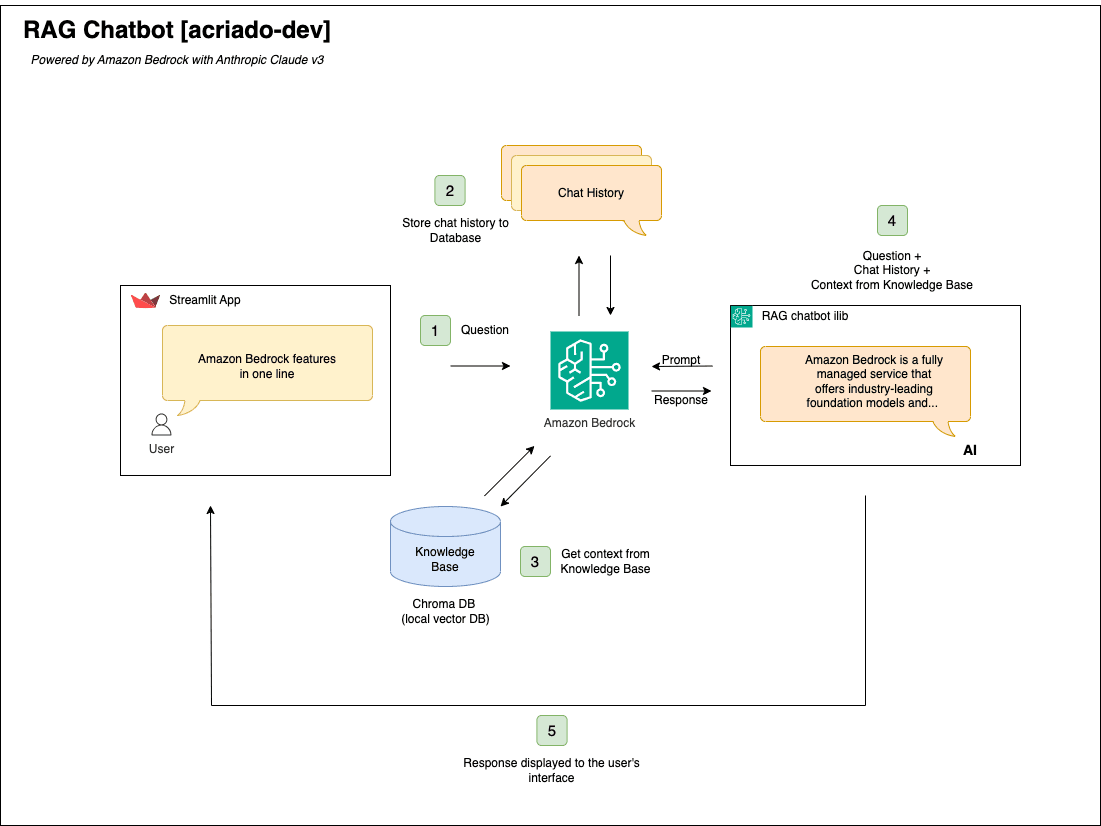

Overview

The goal of this lab is to conduct a comparative analysis between AWS Lambda's Low Latency Runtime (LLRT) and the traditional Node.js runtime, focusing on their performance and efficiency in real-world serverless applications. Identical functions are deployed with LLRT and Node.js in order to evaluate their respective startup times, execution speeds, and resource consumption.

The previous results of performance profiling by AWS Labs, available on GitHub, offer valuable benchmarks and insights:

- LLRT - DynamoDB Put, ARM, 128MB:

- Node.js 20 - DynamoDB Put, ARM, 128MB:

Lab

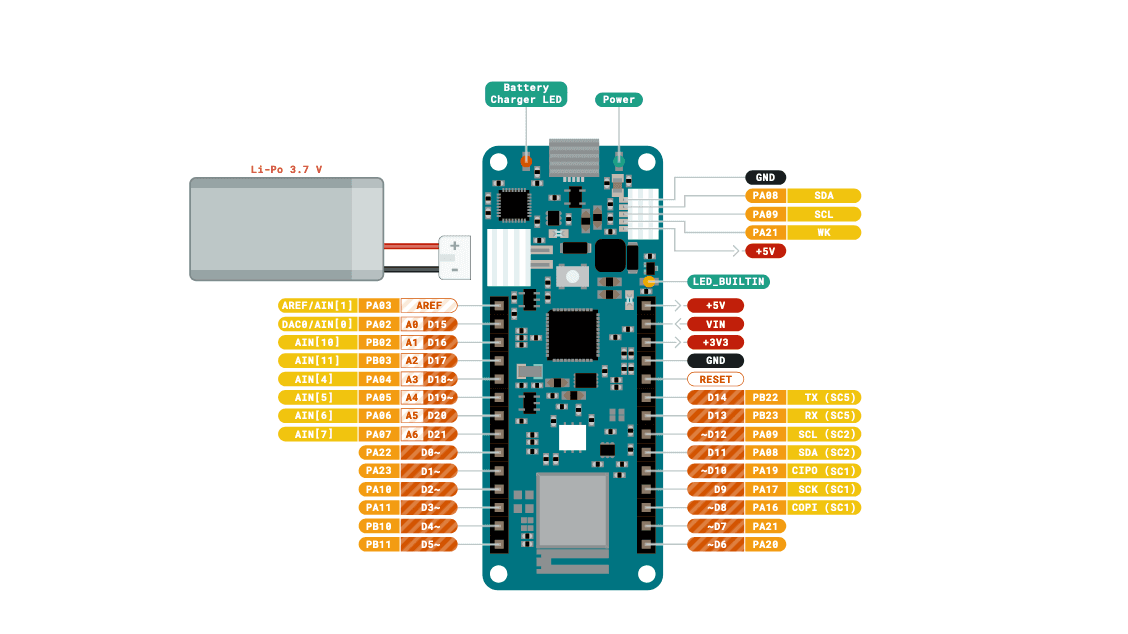

The lab consists of two Lambda functions, "llrt-put-item" and "nodejs-put-item", both designed to put any received event into a DynamoDB table named "llrt-sam-table".

The project is implemented using AWS SAM and uses AWS Lambda Power Tuning for performance profiling and benchmarking. It resembles the default benchmark by AWS Labs, and all the code is available in my personal GitHub repository, so the lab's results can be easily replicated and explored further:

https://github.com/acriado-otc/example-aws-lambda-llrt

Benchmarking: LLRT vs Node.js

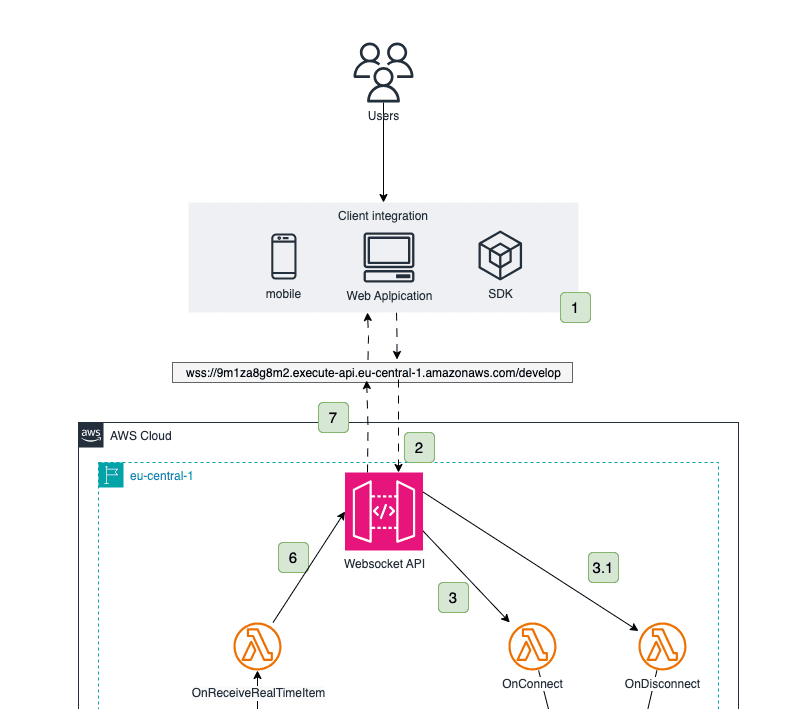

To benchmark the lab, AWS Lambda Power Tuning, an open-source tool, has been chosen. AWS Lambda Power Tuning is a state machine powered by AWS Step Functions that will help us obtain cost and/or performance results for both of the lab's Lambda functions in a data-driven way.

While you can manually run tests on functions by selecting different memory allocations and measuring the time taken to complete, the AWS Lambda Power Tuning tool allows us to automate the process.

The state machine is designed to be easy to deploy and fast to execute. Also, it's language agnostic.

Deployment

To deploy AWS Lambda Power Tuning, clone the official repository and choose your preferred deployment method. In my case I used the SAM CLI for simplicity, but I highly recommend using AWS CDK for IaC deployments:

https://github.com/alexcasalboni/aws-lambda-power-tuning

Executing the State Machine

Regardless of how you've deployed the state machine, you can execute it in a few different ways: programmatically, using the AWS CLI or an AWS SDK, or manually. In this lab, we are going to execute it manually, using the AWS Step Functions web console.

- Once properly deployed, you will find the new state machine in the Step Functions console, in the AWS account and region defined for the lab.

Find it and click "Start execution".

A descriptive name and input should be provided.

We are going to execute llrt-put-item first. This is the input used:

    {
      "lambdaARN": "arn:aws:lambda:eu-west-1:XXXXXXXXXXX:function:llrt-sam-LlrtPutItemFunction-42aAa2of5wqn",
      "powerValues": [128, 256, 512, 1024],
      "num": 500,
      "payload": {"message": "hello llrt lambda"},
      "parallelInvocation": true,
      "strategy": "speed"
    }

All other fields should keep their default values. Finally, click "Start execution" to launch the run.

After a few seconds or minutes, the execution should show the status "Succeeded".

Repeat the same process for the nodejs-put-item Lambda function. The only field that needs to change in the input is the function's ARN and, optionally, the payload.
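For readers who prefer the programmatic route mentioned earlier, the following is a minimal sketch in Python of how the same input could be built and submitted with boto3. The helper names `build_tuning_input` and `start_tuning`, the execution name, and the state machine ARN placeholder are assumptions made for illustration; they are not part of the lab's repository.

```python
import json


def build_tuning_input(lambda_arn, payload, power_values=(128, 256, 512, 1024),
                       num=500, strategy="speed"):
    """Build the state machine input used in this lab for one function."""
    return {
        "lambdaARN": lambda_arn,
        "powerValues": list(power_values),
        "num": num,
        "payload": payload,
        "parallelInvocation": True,
        "strategy": strategy,
    }


def start_tuning(state_machine_arn, tuning_input, name):
    """Start one execution of the power tuning state machine (hypothetical helper)."""
    # boto3 is imported lazily so the input builder works without the SDK installed.
    import boto3

    client = boto3.client("stepfunctions")
    response = client.start_execution(
        stateMachineArn=state_machine_arn,
        name=name,
        input=json.dumps(tuning_input),
    )
    return response["executionArn"]


if __name__ == "__main__":
    llrt_input = build_tuning_input(
        "arn:aws:lambda:eu-west-1:XXXXXXXXXXX:function:llrt-sam-LlrtPutItemFunction-42aAa2of5wqn",
        {"message": "hello llrt lambda"},
    )
    print(json.dumps(llrt_input, indent=2))
    # Placeholder ARN: replace it with the state machine deployed in your account.
    # start_tuning("<state-machine-arn>", llrt_input, "llrt-put-item-speed")
```

Running the same helper with the ARN of the nodejs-put-item function keeps every other parameter identical, which is what makes the two runs comparable.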

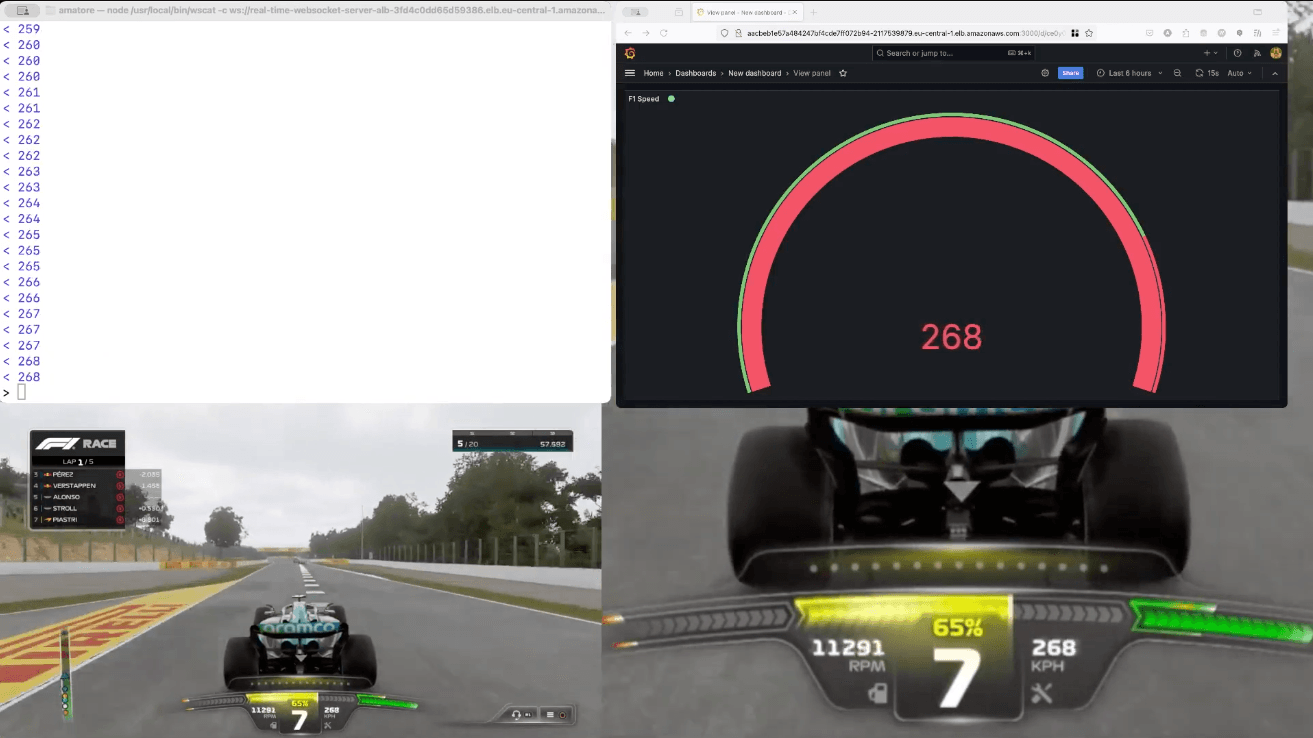

While each execution is running, we can follow its status and progress in the graph view of the Step Functions execution.

Results and Analysis

To get accurate results, each Lambda function has been benchmarked several times with the 'speed' strategy. The results for each Lambda function are presented separately below, followed by a final comparison:

- LLRT Lambda function:

    {
      "power": 1024,
      "cost": 9.519999999999999e-8,
      "duration": 6.25,
      "stateMachine": {
        "executionCost": 0.00025,
        "lambdaCost": 0.0001901263,
        "visualization": "https://lambda-power-tuning.show/#gAAAAQACAAQ=;REREQWbm5kIREdFAAADIQA==;ata9Muy90zTBcEwzwXDMMw=="
      }
    }

- Node.js Lambda function:

    {
      "power": 1024,
      "cost": 1.512e-7,
      "duration": 8.666666666666666,
      "stateMachine": {
        "executionCost": 0.00025,
        "lambdaCost": 0.00025384590000000003,
        "visualization": "https://lambda-power-tuning.show/#gAAAAQACAAQ=;d7fdQ7y7iEKamatBq6oKQQ==;Ckp6Nc+VmzRwbUY0ilkiNA=="
      }
    }

- Comparison

After several executions of the AWS Lambda Power Tuning state machine, it's clear that both Lambda functions have similar performance with 1024MB and 512MB of memory allocated. The huge difference appears in the range from 128MB to 256MB, where LLRT is the more suitable option and should be considered for small functions.
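The per-memory figures behind this comparison can be recovered from the "visualization" URLs in the two outputs. The sketch below assumes that the URL fragment holds three base64 segments (power values as 16-bit little-endian integers, then average durations and average costs as 32-bit little-endian floats); this layout is an assumption about how the tool builds the link, and `decode_visualization` is a hypothetical helper name.

```python
import base64
import struct


def decode_visualization(url):
    """Return (powers_mb, durations_ms, costs_usd) decoded from a visualization URL."""
    fragment = url.split("#", 1)[1]
    power_b64, time_b64, cost_b64 = fragment.split(";")[:3]

    def unpack(b64_text, code, item_size):
        raw = base64.b64decode(b64_text)
        count = len(raw) // item_size
        return list(struct.unpack("<%d%s" % (count, code), raw))

    powers = unpack(power_b64, "h", 2)    # assumed: 16-bit little-endian integers
    durations = unpack(time_b64, "f", 4)  # assumed: 32-bit little-endian floats
    costs = unpack(cost_b64, "f", 4)      # assumed: 32-bit little-endian floats
    return powers, durations, costs


LLRT_URL = "https://lambda-power-tuning.show/#gAAAAQACAAQ=;REREQWbm5kIREdFAAADIQA==;ata9Muy90zTBcEwzwXDMMw=="
NODEJS_URL = "https://lambda-power-tuning.show/#gAAAAQACAAQ=;d7fdQ7y7iEKamatBq6oKQQ==;Ckp6Nc+VmzRwbUY0ilkiNA=="

for label, url in (("LLRT", LLRT_URL), ("Node.js", NODEJS_URL)):
    powers, durations, costs = decode_visualization(url)
    for power, duration, cost in zip(powers, durations, costs):
        print(f"{label:8s} {power:5d} MB  {duration:8.2f} ms  {cost:.3e} USD")
```

Under that assumption, the 1024MB entries decode to the same duration and cost reported in the JSON outputs, which suggests the remaining entries can be read as the averages for 128MB, 256MB and 512MB.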

In terms of cost, for LLRT the cheapest average execution is at 128MB and the most expensive at 256MB, whereas for the Node.js function the most expensive is at 128MB, just the opposite of LLRT. However, it's important to note that the strategy applied for this lab's benchmark was 'speed'. To get more accurate cost results, the state machine should be executed with the 'cost' strategy instead.
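To make the cost figures easier to interpret, here is a minimal sketch of how the compute cost of a single invocation can be estimated from its duration and memory size. Lambda bills duration in 1 ms increments, proportionally to the configured memory. The price constant is a hypothetical value chosen for illustration, not a figure taken from the lab, and the per-request charge is ignored.

```python
import math


def invocation_cost(duration_ms, memory_mb, price_per_gb_second):
    """Estimate the compute cost in USD of one invocation (request fee excluded)."""
    billed_ms = math.ceil(duration_ms)  # duration is billed in 1 ms increments
    gb_seconds = (memory_mb / 1024) * (billed_ms / 1000)
    return gb_seconds * price_per_gb_second


# Hypothetical price in USD per GB-second; check the current pricing for your
# region and architecture before relying on it.
ASSUMED_PRICE = 0.0000136

print(invocation_cost(6.25, 1024, ASSUMED_PRICE))
```

With this assumed price, the 6.25 ms average of the LLRT function at 1024MB comes out at about 9.52e-8 USD, in line with the reported cost. Other results need not match, because the reported duration is an average while rounding happens per invocation.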

Conclusion

LLRT can clearly offer advantages for some projects, such as faster startup times and potential cost savings, but it currently lacks the stability, support, and real-world testing required to be considered for production use.

For developers working with smaller serverless functions that prioritize rapid response times and efficient resource utilization, LLRT is an alternative to traditional Lambda runtimes. However, it's essential to evaluate LLRT carefully and consider the specific requirements of your application before adopting it.

As LLRT continues to evolve and mature, its adoption will surely increase, and AWS will progressively refine the runtime for latency-sensitive use cases. In the meantime, let's monitor LLRT's development and wait for news, especially regarding production environments.